Welcome to the public cloud(s). Long gone are the days when traditional email DLP, URL proxy filtering, and L3 firewalls were enough to mitigate data loss to malicious websites.

Maybe you have a developer who wants to use some benign, non-threatening Google API to read non-sensitive data. Seems okay, right? Well, I wouldn’t be writing this article to update the cybersecurity community if everything was “all good,” would I?

Here’s a typical IT Industry problem ….

Developer: “I need to allow network access to our GSUITE APIs so we can do regular IT operations and monitoring of non sensitive GSUITE meta-data”

Security: “….ugh”

As a disclaimer, all of this research was done in my private, personal lab environment. Please keep in mind that the rest of this write-up can be used by people with both good and bad intentions. The problem is already out there across the industry, and this isn’t some “vulnerability” with a patch that can be responsibly disclosed. So, if you’re upset that I’m shining a light in the dark, you’re welcome to stay blissfully ignorant and stumble.

What’s the scoop?

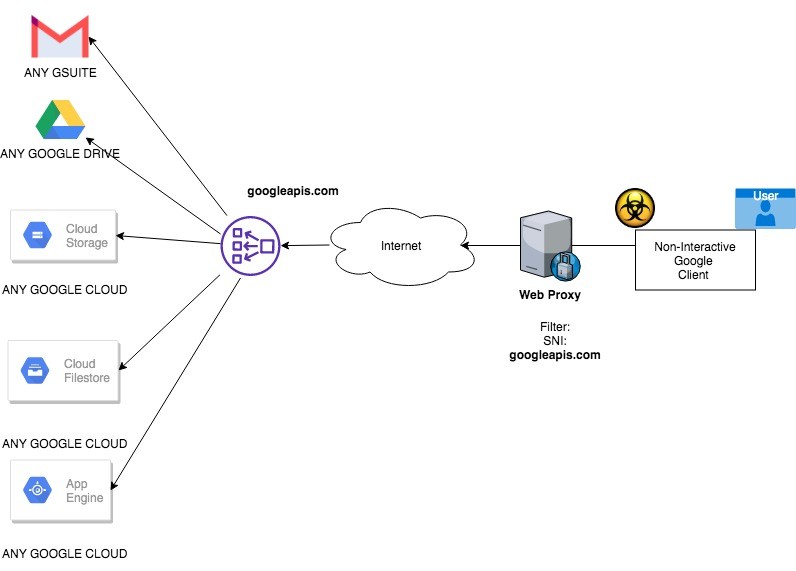

- When a developer uses the Google SDKs, the SDK is natively programmed to “call home” back to Google or the public cloud, right? Correct.

What’s the vulnerability?

- Google SDKs “typically” but “not always” call back to a host whose Transport Layer Security (TLS) Server Name Indication (SNI) falls under googleapis.com. It is similar for AWS.

- The specific “API” being used is hidden within this TLS connection to googleapis.com

- The specific Google “Account” or “user” being used is also hidden inside an authorization token

What’s the impact?

- Once you allow googleapis.com, you are allowing ALL outbound connections to ALL googleapis.com APIs for WHOEVER’s GSUITE and/or Google Cloud Platform account.

- Basically, you opened the front-door to everything and you didn’t even know it.
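To make the impact concrete, here is a minimal sketch of the only decision a hostname-based allow rule can make. It is not part of my original PoC; the `ALLOWED_SUFFIXES` rule, the function names, and the placeholder tokens are all assumptions for illustration. The two Drive URLs are real endpoints, but nothing here touches the network.

```python
from urllib.parse import urlsplit

# Hypothetical allow rule: the hostname is the only thing an SNI filter can match on.
ALLOWED_SUFFIXES = ("googleapis.com",)


def proxy_allows(url: str) -> bool:
    """Decide as a hostname-only filter would: path and headers are never consulted."""
    host = urlsplit(url).hostname or ""
    return any(host == s or host.endswith("." + s) for s in ALLOWED_SUFFIXES)


def visible_to_proxy(url: str, bearer_token: str) -> dict:
    """Return only what stays outside the TLS tunnel; the token is deliberately dropped."""
    return {"sni": urlsplit(url).hostname}


# Two requests that differ only in fields carried inside TLS (placeholder tokens).
approved = ("https://www.googleapis.com/drive/v3/files", "TOKEN_FOR_COMPANY_ACCOUNT")
foreign = ("https://www.googleapis.com/upload/drive/v3/files", "TOKEN_FOR_SOMEONE_ELSE")

for url, token in (approved, foreign):
    print(proxy_allows(url), visible_to_proxy(url, token))
```

Both requests produce the same verdict and the same visible metadata, which is the whole problem: a read against the company account and an upload to someone else's account look identical at this layer.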

What’s the Risk?

- That depends. If you have a multi-tenant data storage solution that supports multiple lines of business, then the decision to allow that server to connect to googleapis.com for one line of business puts everyone else’s data at risk of exfiltration.

Don’t believe me? Want to see the PoC? Okay.

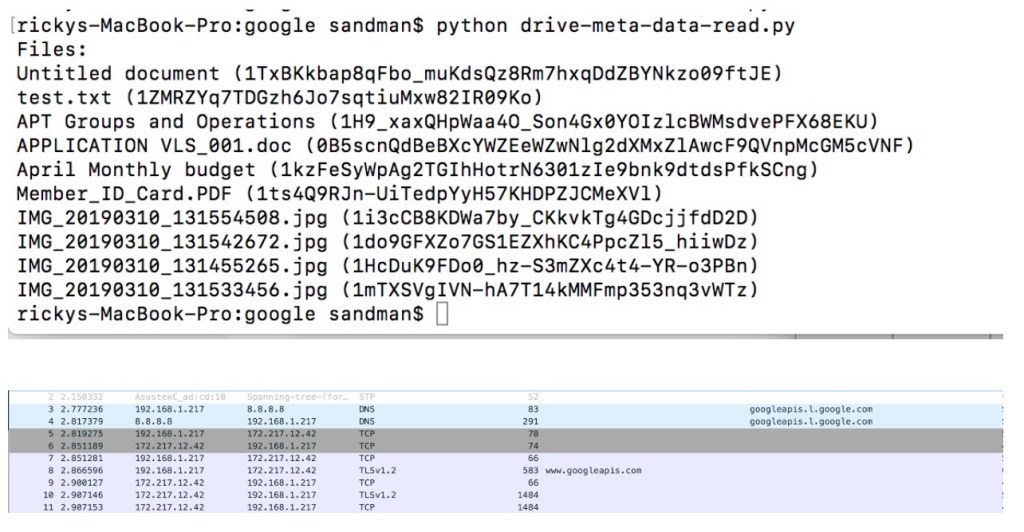

Here is a simple Google Cloud SampleProject talking to Google for GSUITE DRIVE access.

If you want to replicate the test, it’s easy enough to use Google’s free and public source code and build a sample project. They give you everything you need here:

https://developers.google.com/drive/api/v3/about-sdk

What does that mean? The results here confirm that even though your developers only want to “USE GSUITE DRIVE,” they need broad network access to all Google APIs. Oh, and this is generally true for AWS as well. When you think about it, that is great for the public cloud provider, because now your developers are one step closer to using all the “cool stuff” on the public cloud.

Simple Example

What can you do about it?

That depends on whether you’re on the PROTECT or DETECT side. Take these things into consideration if your back is against the wall.

PROTECT

- DON’T ALLOW network access from a proxy that has BROAD Desktop/Server IP range sources allowed (Typically the case for Desktop Browser Proxies)

- DO create a dedicated GCP PROXY which permits only sources from approved SERVER/JUMP BOX/VDI

- DON’T ALLOW network access from systems or system ranges that support multi-tenant data storage solutions

- DO create HOST ACLs and/or virtual “Security Groups” which restrict EGRESS to the dedicated GCP Proxy

- DON’T think that basic URL filtering will provide any real security value

- DON’T think that because your Google APIs are disabled it magically protects you from “Malware” using the attacker’s enabled Google APIs and not yours

- DO enable strict permissions on the Google / AWS SDK executables

- DO enable strict permissions on the Google / AWS SDK credential files and secrets (the Google sample project used here keeps its token in token.pickle)
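As one way to check the last point, here is a small sketch that flags secret files readable by anyone other than their owner. It assumes a POSIX host; the function names are mine, and the paths you pass in are whatever your own inventory says they are.

```python
import os
import stat


def is_too_permissive(path: str) -> bool:
    """True if group or other users have any access bit set on the file."""
    mode = stat.S_IMODE(os.stat(path).st_mode)
    return bool(mode & (stat.S_IRWXG | stat.S_IRWXO))


def audit_secret_files(paths):
    """Return the subset of existing files whose permissions are looser than owner-only."""
    return [p for p in paths if os.path.isfile(p) and is_too_permissive(p)]
```

Calling `audit_secret_files([...])` with the credentials.json and token.pickle paths on a host returns the ones that should be tightened to owner-only (for example, mode 600).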

DETECT

- DON’T think that you can inspect the traffic at the proxy (It always resolves to the top level domain and the traffic is encrypted using TLS from a non-browser client)

- DON’T let just anyone search and detect Google SDK secrets for DLP. Only allow your CIRT team to perform these functions

- DO monitor for network calls from networks where Internet connections are not allowed AND/OR monitor those multi-tenant data storage systems (Hadoop/Teradata) when they make calls to Google APIs

- DO develop host based DLP forensics tools that look for the Google SDK token AND parse for unauthorized accounts/ user domains
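The network monitoring idea above could look roughly like this. It is a sketch under assumptions: the restricted CIDR range is made up, and the `(source_ip, hostname)` tuples stand in for whatever your proxy or flow logs actually provide.

```python
import ipaddress

# Hypothetical ranges where outbound Internet access is not expected.
RESTRICTED_NETWORKS = [ipaddress.ip_network("10.20.0.0/16")]
WATCHED_SUFFIX = "googleapis.com"


def is_watched_host(host: str) -> bool:
    """True for googleapis.com itself or any subdomain of it."""
    host = host.lower().rstrip(".")
    return host == WATCHED_SUFFIX or host.endswith("." + WATCHED_SUFFIX)


def flag_events(events):
    """Yield (src_ip, host) pairs where a restricted source reached a Google API host."""
    for src_ip, host in events:
        addr = ipaddress.ip_address(src_ip)
        if is_watched_host(host) and any(addr in net for net in RESTRICTED_NETWORKS):
            yield src_ip, host


sample = [
    ("10.20.5.17", "www.googleapis.com"),    # restricted source, Google API host
    ("10.99.1.4", "www.googleapis.com"),     # source outside the restricted range
    ("10.20.5.17", "intranet.example.com"),  # restricted source, unrelated host
]
print(list(flag_events(sample)))
```

Only the first sample event is flagged. This tells you that a restricted host talked to Google, not whose account it talked to, which is why the host-based checks further down still matter.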

On that last note, host-based forensics tools: I spent some time playing around with a PoC just for fun and wanted to share what I learned.

Google SDKs that act as a “non-interactive” service typically use service account credentials. In my sample project, this followed an OAuth 2.0 flow in which a Client ID and Client Secret are passed to the OAuth authorization service and, in return, an OAuth 2.0 authorization token is issued to the client SDK. The initial client credential is stored as credentials.json; after a successful authN, the resulting token is cached in a local file called token.pickle.

My initial thought was to develop a piece of code that helps me find and detect malware using unauthorized Google accounts by parsing the tokens on the local machine. The pseudo code looked like this.

UPDATE – SEE THIS ARTICLE WHERE I IMPLEMENTED THIS MALWARE

- Determine if Google SDK is installed

- Find any Google credentials.json or token.pickle secrets on the host

- Parse the credentials.json for anything useful (BTW, the ClientID here could be mapped against your organization’s known ClientIDs to determine whether the credential is an approved one tied to your GCP account)

- Unpickle the token.pickle to see if I can find the “Authorization token” storage location (BTW, I think this OAuth token is only stored in memory; this is important because it has the details we need to validate whether this is an unauthorized account/domain)

Determining whether the Google SDK is installed is easy enough in a script:

    locate gcloud
    gcloud version
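The same check can be done from Python, which is handy if the rest of the tool is Python anyway. This is a minimal sketch; the function name and the choice of which module names to probe are my assumptions, and it only sees what is visible to the current interpreter and PATH.

```python
import importlib.util
import shutil


def google_sdk_footprint() -> dict:
    """Report which Google tooling is discoverable from this interpreter and PATH."""

    def has_module(name: str) -> bool:
        # find_spec raises if a parent package is missing, so treat that as "absent".
        try:
            return importlib.util.find_spec(name) is not None
        except (ImportError, ValueError):
            return False

    return {
        "gcloud_cli": shutil.which("gcloud"),  # full path, or None if not on PATH
        "googleapiclient": has_module("googleapiclient"),
        "google_auth": has_module("google.auth"),
    }


print(google_sdk_footprint())
```

A clean result here does not prove the host is clean: malware can bundle its own copy of the libraries in a private directory or virtual environment.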

From there we want to find the credentials.json files:

    find / -xdev -name credentials.json 2> >(grep -v 'Permission denied' >&2) >> googleTokens.txt
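If you would rather stay in one language, the search can be sketched in Python as well. The `SECRET_NAMES` set and the function name are mine. Note that, unlike `find -xdev`, `os.walk` does not stop at filesystem boundaries, so point it at a sensible root.

```python
import os

SECRET_NAMES = {"credentials.json", "token.pickle"}


def find_secret_files(root: str):
    """Walk root and yield paths whose file name matches a known secret file name."""
    # With the default onerror=None, os.walk silently skips unreadable directories.
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            if name in SECRET_NAMES:
                yield os.path.join(dirpath, name)
```

Writing the results out one path per line gives the same googleTokens.txt format that the parsing step reads.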

From there we want to parse the credential for anything useful that could be used to validate whether this is a “legitimate credential from my own GCP account”

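    import json
    import os

    # Shell script that runs the find command above and writes googleTokens.txt
    find_token = '/bin/bash IoCgoogleDataExfil.sh'
    # os.system(find_token)

    filepath = './googleTokens.txt'

    with open(filepath) as fp:
        for line in fp:
            # Each line is the path to one credentials.json found on the host
            with open(line.rstrip()) as json_file:
                data = json.load(json_file)
            # The OAuth client fields sit under the "installed" key
            client = data["installed"]
            print(client["client_id"])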

At this point, I stopped the PoC because I needed to focus on my family. Notionally, you’d need a security web service that could take the client_id and validate whether it is an ID that has been issued from your Google account. GCP should offer a VIEW/READ type of API call on the credentials API that lets you view and parse the client_id values from a list of “approved” issued credentials.
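Until such a service exists, the comparison itself is simple. Here is a minimal sketch that assumes you maintain the allowlist yourself; `APPROVED_CLIENT_IDS` and its single entry are made-up placeholders, not real credentials.

```python
import json

# Hypothetical allowlist; in practice this would come from your own inventory.
APPROVED_CLIENT_IDS = {
    "111111111111-exampleapproved.apps.googleusercontent.com",
}


def extract_client_id(credentials_json: str):
    """Pull client_id out of a credential file, whichever layout it uses."""
    data = json.loads(credentials_json)
    # OAuth client files nest their fields under "installed" or "web".
    for section in ("installed", "web"):
        if section in data and "client_id" in data[section]:
            return data[section]["client_id"]
    # Service account key files carry client_id at the top level.
    return data.get("client_id")


def is_approved(credentials_json: str) -> bool:
    """True only when the file's client_id is on the allowlist."""
    return extract_client_id(credentials_json) in APPROVED_CLIENT_IDS
```

Anything that returns `False` is a credential your organization did not issue, which is exactly the signal this tool is looking for.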

Another idea I had was to simply find the OAuth 2.0 authorization token and parse it for the GCP organization being used. If the organization does not map back to my approved organization, then we have a potential data exfiltration issue. With the “strings” command and a bit of research, I noticed that token.pickle is a local file that keeps some configuration information available for the SDK to use. Let’s see what is inside token.pickle:

    rickys-MacBook-Pro:google sandman$ strings token.pickle
    ccopy_reg
    _reconstructor
    (cgoogle.oauth2.credentials
    Credentials
    c__builtin__
    object
    Ntp3
    (dp5
    S'_token_uri'
    Vhttps://oauth2.googleapis.com/token
    sS'_client_secret'
    secret goes here
    sS'_id_token'
    NsS'token'
    some type of token goes here, but it's not a normal base64 or JWT token format... what is it?
    sS'_refresh_token'
    Oauth refresh token goes here? But not in the normal format?
    sS'_scopes'
    (lp16
    S'https://www.googleapis.com/auth/drive'
    asS'_client_id'
    V328019499958-hlrc1foei7sea0l0787s1bt111t2s6b6.apps.googleusercontent.com
    sS'expiry'
    cdatetime
    datetime
    (S'\x07\xe3\x07\x1a\x10,\x03\x00\x91\x7f'
    tp23
    Rp24

“strings” alone will give you some tokens; however, they don’t seem to follow a typical base64-encoded JWT format. Go see for yourself. Maybe you can figure out what the Google SDK is doing here with the token string. I did not have the hours in my day to commit. My thought was that this is some output of the pickling process. So let’s un-pickle it and see what we get.

Here is the unpickling code:

    import pickle

    # Unpickling only works if the google-auth package is importable
    with open('token.pickle', 'rb') as infile:
        creds = pickle.load(infile)

    print(creds)

And what does it tell us?

    rickys-MacBook-Pro:google sandman$ python unpickle.py
    <google.oauth2.credentials.Credentials object at 0x10bd26850>

Looks like a memory location to me… this is where my fun ended. The point is, with some smart security engineers, you’ll be able to develop a local forensics tool that searches for Google SDKs, parses the tokens, and then compares the key/value pairs from the tokens to attributes from your own account. Any key/value pair from the tokens that doesn’t match your Google account info is a sign that you may have a data exfiltration issue.
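For anyone picking this up, here are two notes and a sketch. The printed value is just the default representation of a Credentials object; the fields seen in the “strings” output live on it as attributes. Also, `pickle.load` will run whatever code a pickle tells it to, so it is a poor fit for files you suspect were planted by malware. The sketch below instead walks the pickle opcodes with the standard library’s `pickletools` and never executes anything. The function name and the "take the value that follows the key" heuristic are my assumptions, and it stops quietly at the first opcode it cannot decode.

```python
import pickletools

STRING_OPS = {
    "STRING", "BINSTRING", "SHORT_BINSTRING",
    "UNICODE", "BINUNICODE", "SHORT_BINUNICODE", "BINUNICODE8",
}
MEMO_OPS = {"PUT", "BINPUT", "LONG_BINPUT", "MEMOIZE"}


def scan_pickle_fields(data: bytes, wanted):
    """Map each wanted key to the string that follows it in the opcode stream.

    The pickle is never executed. A key followed by a non-string value maps to None.
    """
    found = {}
    pending = None
    try:
        for opcode, arg, _pos in pickletools.genops(data):
            if opcode.name in MEMO_OPS:
                continue  # memo bookkeeping sits between a key and its value
            is_string = opcode.name in STRING_OPS
            if pending is not None:
                found[pending] = arg if is_string else None
                pending = None
            elif is_string and arg in wanted:
                pending = arg
    except Exception:
        pass  # keep whatever was recovered before the undecodable opcode
    return found
```

Usage would be along the lines of `scan_pickle_fields(open("token.pickle", "rb").read(), {"_client_id", "_token_uri", "_scopes"})`, then comparing `_client_id` against your approved list. As far as I know, the access and refresh tokens themselves are opaque strings rather than JWTs, so the client ID is the more useful field to match on.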