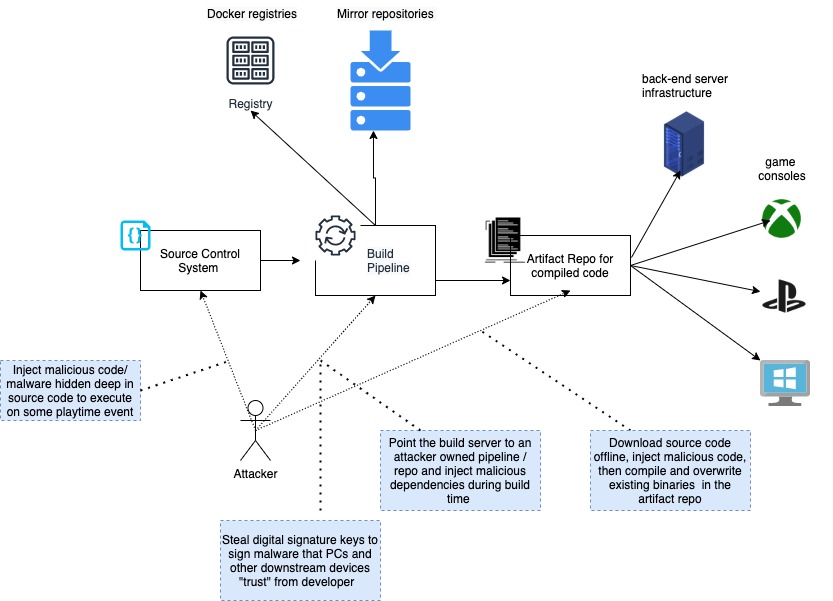

THE PROBLEM

This is #1 in a series on secure software CICD supply chains. This post and the ones that follow will go beyond “Googling how to set it up” and instead focus on more nuanced security and operational issues.

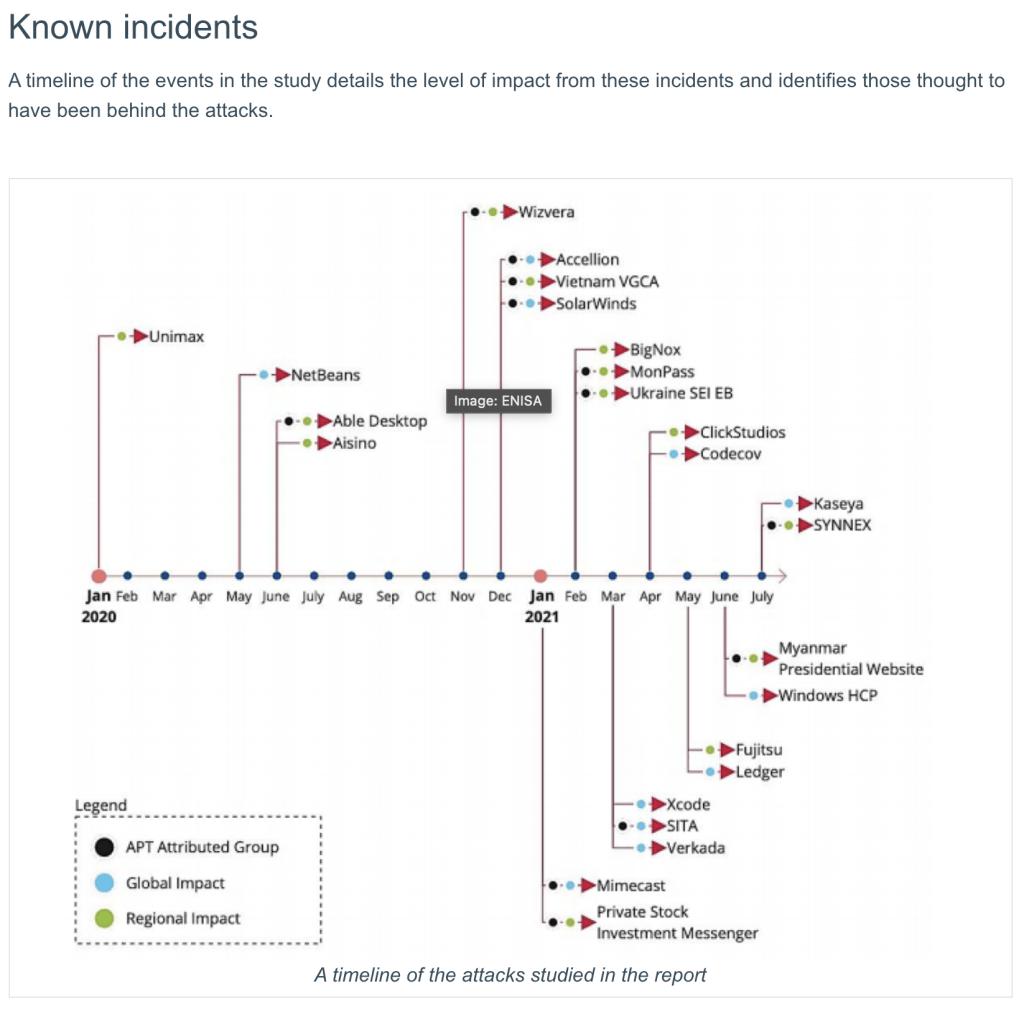

At the executive level, consider supply chain attacks like the recent SolarWinds incident, in which attackers compromised SolarWinds’ IT management software, Orion, and delivered malicious code to customers through legitimate Orion updates. Orion was used to manage servers at various Fortune 500 companies, branches of the US government, the threat response firm FireEye, and Microsoft. The compromised software carried malware that gave the attackers command and control over the systems that installed and ran it.

In this lab, I’ll start with the initial attack concern: poisoning the upstream source code in the repository before the code goes through CICD and release. I’m focused on non-repudiation of code and on ensuring it’s authentic. We’ll do this with digitally signed Git commits, using a hard token that protects the private key material.

This article doesn’t cover exfiltration of source code or the build pipeline itself.

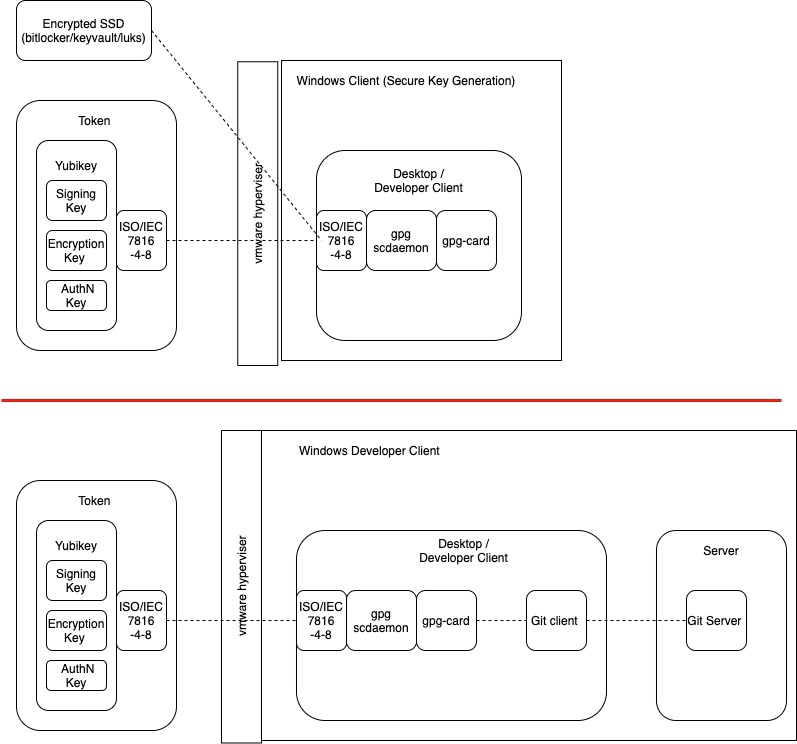

component architecture – poc

- ISO/IEC 7816-4 and -8 compatible hard token. A YubiKey 5 NFC is used; YubiKey 4 and later support GnuPG and various other OpenPGP libraries

- gpg-card and scdaemon, as described in the “Functional Specification of the OpenPGP application on ISO Smart Card Operating Systems”

- VMware Fusion virtual machines (VMDK) running Windows 10 Professional

- a.) A separate client for key generation and key back-up (to avoid malware tampering)

- b.) A separate dev client for Git tests (where malware may exist)

- Running Gpg4win, a Windows-compatible open source distribution of GnuPG (an OpenPGP implementation)

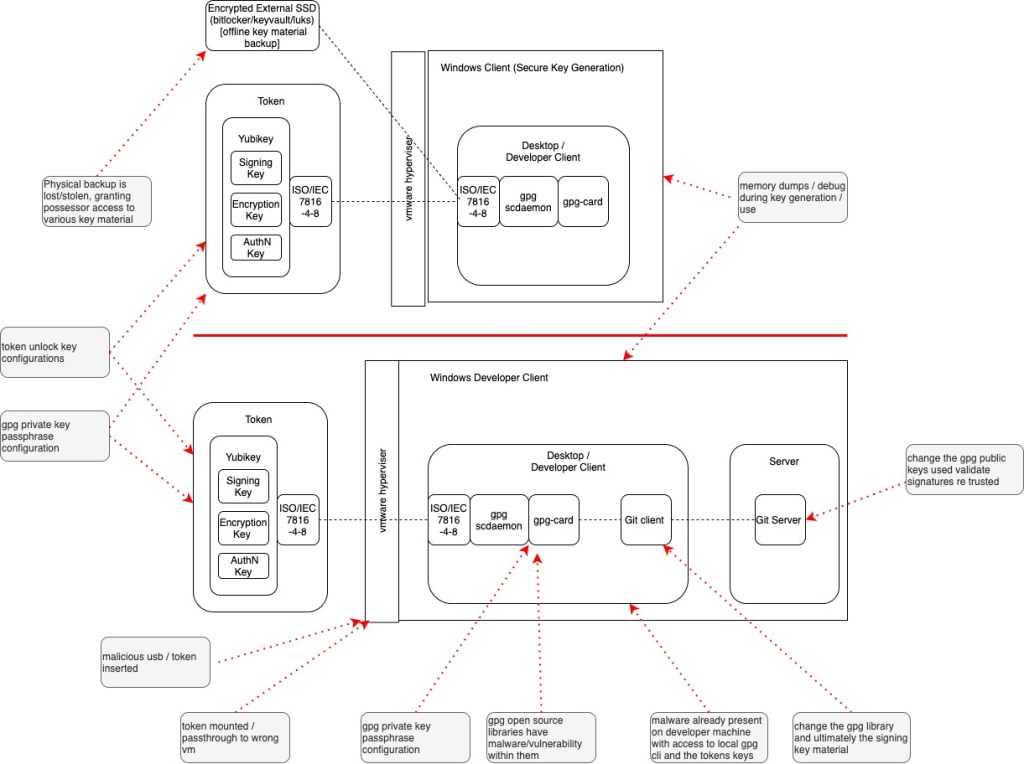

poc threat model

Attack Surface

- I’ve overlaid the physical and logical design of the system to map out the attack surface. This includes everything from the physical token and external hard drive to the client-side libraries and their programmable interfaces, etc.

Vectors of Attack

- A few items that I expand on later in the “Set Up” section are:

- Physical access to external devices like USB and SSD drives

- Pre-existing malware on a development machine that runs with the user’s access to the gpg path and hard token functions

- User misconfigurations when interacting with the Yubikey API

- User misconfigurations when interacting with the GPG API

- Git client misconfigurations

- etc.

Threats/Impacts

- Just using STRIDE as a quick frame of reference

- [S] – Git commits –> Git CLI –> Amend a commit to spoof a code submission

- [S] – Physical Access –> token –> Git CLI –> Spoof MAC code

- [T] – Git CLI – Tampering with the git global configurations

- [T] – GPG CLI – Tampering with key material via gpg --card-edit (CRUD operations)

- [R] – Git CLI – Commit malicious/unsigned binaries that get merged into the master branch

- [I] – Physical access –> token/external HD –> More likely to result in [I] information disclosure

- [I] – Logical access –> operating system – memory –> More likely to result in [I] information disclosure of key material

- [I] – Logical access –> operating system – gpg CLI –> More likely to result in [I] information disclosure of key material

- [D] – GPG CLI – Tampering with key material via gpg --card-edit (CRUD operations) results in loss of key material, meaning code cannot be checked in correctly downstream

- [E] – Elevation of privilege – Insecure gpg passphrase / YubiKey unlock key misconfigurations result in access to SSH/AuthN keys that can be used to elevate privileges

I’m not a stickler for any particular framework; I’m just using the bullets above to illustrate the thought process and a few top-of-mind items. This is very superficial, as it doesn’t even dig into the source code logic.

git functionality

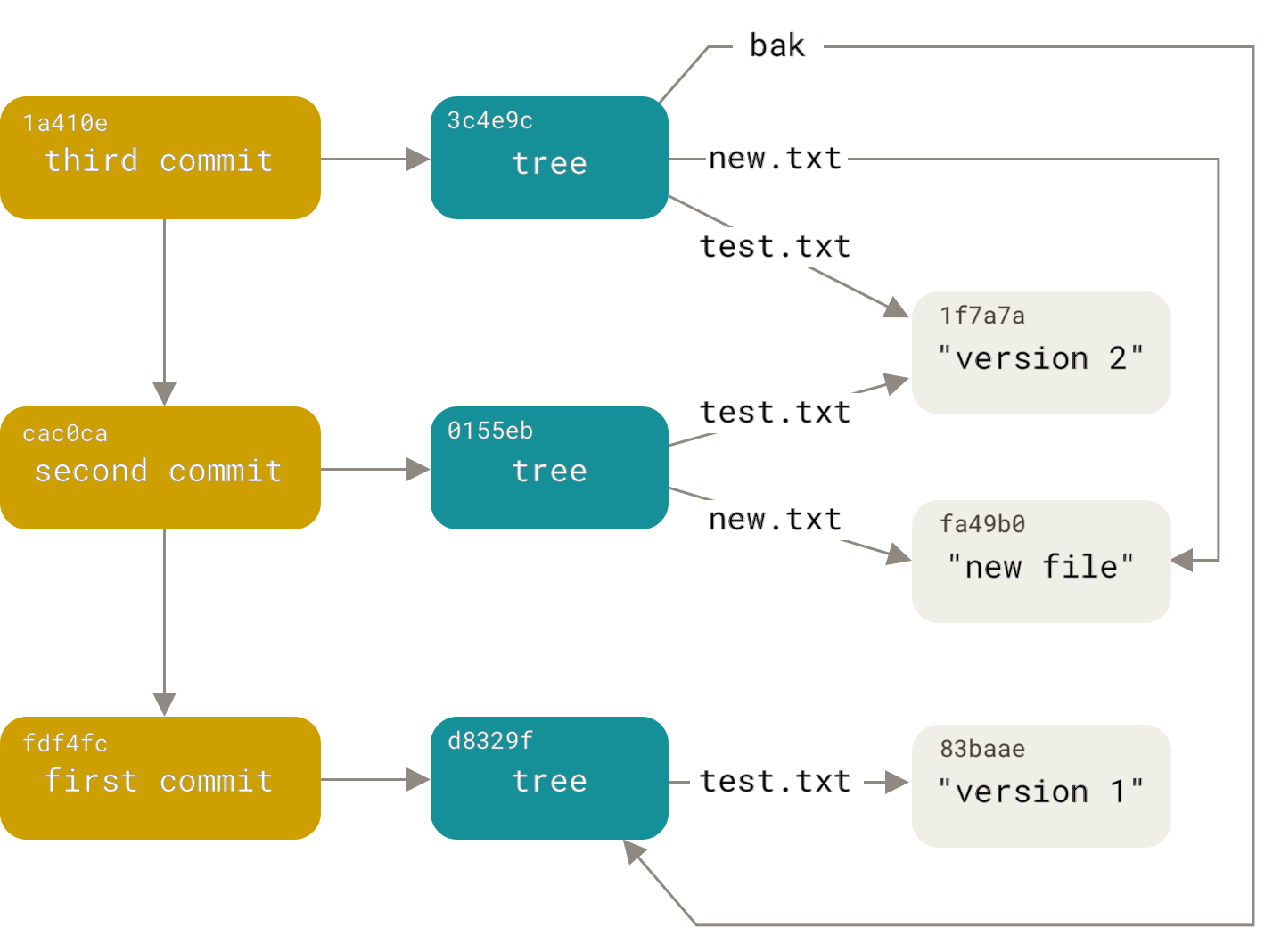

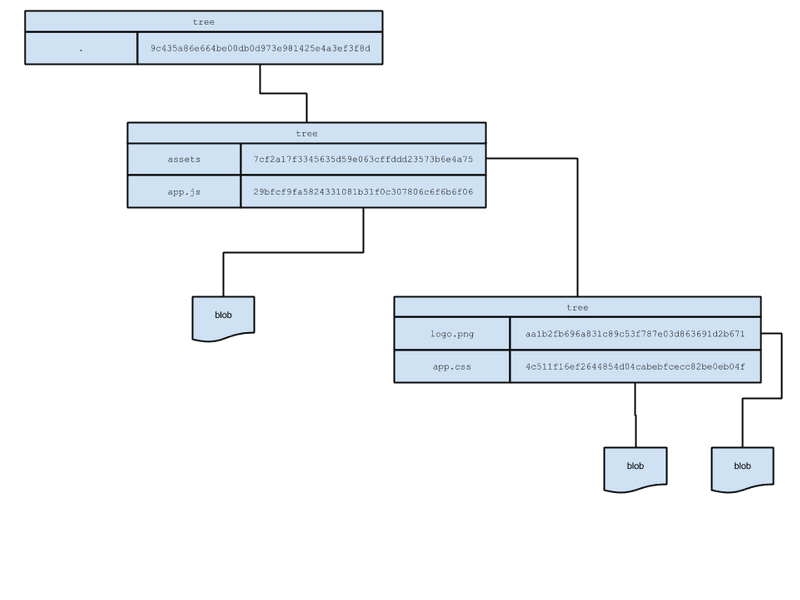

Git commit history and the commit hash

Here is a bit of textbook material, but I found it insightful: later, when considering source code poisoning attacks, the Git filesystem and hash functionality play into the broader design beyond YubiKey + Git signing. For me, the text below was worth reading and taking some notes on.

Git is a content-addressable filesystem which means that at the core of Git is a simple key-value data store. What this means is that you can insert any kind of content into a Git repository, for which Git will hand you back a unique key you can use later to retrieve that content. When you git commit you create new objects in the filesystem. The output from creating objects is a 40-character checksum hash. This is the SHA-1 hash — a checksum of the content you’re storing plus a header.

$ find .git/objects -type f

.git/objects/d6/70460b4b4aece5915caf5c68d12f560a9fe3e4

$ git cat-file -p d670460b4b4aece5915caf5c68d12f560a9fe3e4

test content

Git “trees” store content in a manner similar to a UNIX filesystem, but a bit simplified. All the content is stored as tree and blob objects, with trees corresponding to UNIX directory entries and blobs corresponding more or less to inodes or file contents. A single tree object contains one or more entries, each of which is the SHA-1 hash of a blob or subtree with its associated mode, type, and filename.
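To make the tree layout concrete, here is a minimal Python sketch (my own illustration, not Git’s code) that serializes a tree object: each entry is `<mode> <name>\0` followed by the 20 raw bytes of the entry’s SHA-1, and the body is hashed behind a `tree <size>\0` header. The plain sort by name is a simplifying assumption; Git sorts subdirectories as if their names ended in `/`. You can compare the result with `git write-tree` in a repository containing the same file.

```python
import hashlib


def git_object_id(obj_type: str, body: bytes) -> str:
    """Hash a Git object: SHA-1 over b'<type> <size>\\0' + body."""
    header = f"{obj_type} {len(body)}".encode() + b"\0"
    return hashlib.sha1(header + body).hexdigest()


def tree_body(entries):
    """Serialize (mode, name, hex_sha1) entries into a tree object body.

    Simplification: entries are sorted by plain name; real Git sorts a
    subdirectory as if its name ended with '/'.
    """
    body = b""
    for mode, name, hex_sha in sorted(entries, key=lambda e: e[1]):
        # "<mode> <name>\0" followed by the 20 raw (binary) bytes of the SHA-1
        body += f"{mode} {name}".encode() + b"\0" + bytes.fromhex(hex_sha)
    return body


# A blob holding "version 1\n", referenced from a tree as test.txt
blob_id = git_object_id("blob", b"version 1\n")
tree_id = git_object_id("tree", tree_body([("100644", "test.txt", blob_id)]))
print("blob", blob_id)
print("tree", tree_id)
```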

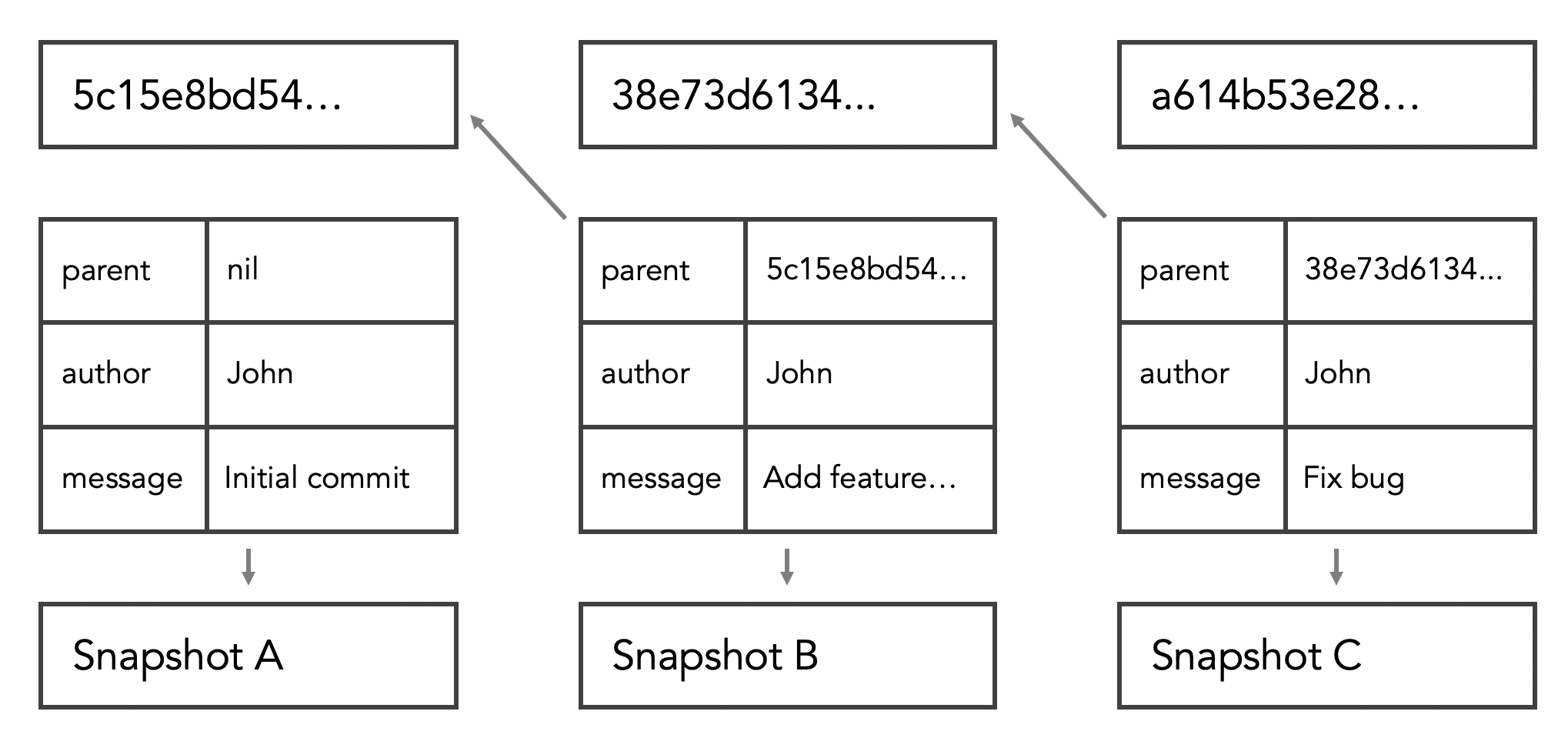

The more familiar object is the commit object: it specifies the top-level tree for the snapshot of the project at that point; the parent commits, if any; the author/committer information (which uses your user.name and user.email configuration settings and a timestamp); a blank line; and then the commit message. Each commit object stores the full SHA-1 of the commit that came directly before it; the command line merely lets you abbreviate it, as in the commands below.
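Since a commit object is just text, it is easy to rebuild one and see why the ID moves when any field changes. The Python sketch below is an illustration under simplifying assumptions: it reuses the tree ID and identity line from the example that follows, and the “Mallory” identity is hypothetical. Real commits may carry extra headers (encoding, gpgsig) that this sketch ignores.

```python
import hashlib


def git_object_id(obj_type: str, body: bytes) -> str:
    """SHA-1 over b'<type> <size>\\0' + body, as Git does for every object."""
    return hashlib.sha1(f"{obj_type} {len(body)}".encode() + b"\0" + body).hexdigest()


def commit_body(tree: str, parents: list, author: str, committer: str, message: str) -> bytes:
    """Serialize a commit: header lines, a blank line, then the message."""
    lines = [f"tree {tree}"]
    lines += [f"parent {p}" for p in parents]  # full 40-character parent IDs
    lines.append(f"author {author}")
    lines.append(f"committer {committer}")
    return ("\n".join(lines) + "\n\n" + message).encode()


tree = "d8329fc1cc938780ffdd9f94e0d364e0ea74f579"
ident = "Scott Chacon <foobar@gmail.com> 1243040974 -0700"
original = git_object_id("commit", commit_body(tree, [], ident, ident, "First commit\n"))

# Spoofing only the author line (hypothetical identity) changes the commit ID
spoofed_ident = "Mallory <mallory@example.com> 1243040974 -0700"
spoofed = git_object_id("commit", commit_body(tree, [], spoofed_ident, ident, "First commit\n"))
print(original != spoofed)  # True
```

The same holds for the message and the parent lines: any edit produces a different ID, which is what later lets a signature over the commit detect tampering.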

$ echo 'First commit' | git commit-tree d8329f

fdf4fc3344e67ab068f836878b6c4951e3b15f3d

#Now you can look at your new commit object with git cat-file:

$ git cat-file -p fdf4fc3

tree d8329fc1cc938780ffdd9f94e0d364e0ea74f579

author Scott Chacon <foobar@gmail.com> 1243040974 -0700

committer Scott Chacon <foobar@gmail.com> 1243040974 -0700

First commit

$ echo 'Second commit' | git commit-tree 0155eb -p fdf4fc3

cac0cab538b970a37ea1e769cbbde608743bc96d

$ echo 'Third commit' | git commit-tree 3c4e9c -p cac0cab

1a410efbd13591db07496601ebc7a059dd55cfe9

Git first constructs a header which starts by identifying the type of object, in this case a blob. To that first part of the header, Git adds a space followed by the size in bytes of the content, and a final null byte. Git concatenates the header and the original content and then calculates the SHA-1 checksum of that new content.
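The header construction above fits in a few lines of Python with hashlib. This is a sketch for illustration; `git hash-object` remains the authoritative tool. Hashing `test content\n` (what `echo 'test content'` writes) reproduces the object ID seen in the `find .git/objects` listing earlier.

```python
import hashlib


def git_blob_id(content: bytes) -> str:
    """Compute a Git blob ID: SHA-1 of b'blob <size>\\0' + content."""
    header = b"blob " + str(len(content)).encode() + b"\0"
    return hashlib.sha1(header + content).hexdigest()


print(git_blob_id(b"test content\n"))
# d670460b4b4aece5915caf5c68d12f560a9fe3e4
```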

As you may know, you can delete a file in staging and reconstruct it from the history in Git. This is because each Git commit is essentially “linked” to the others in the content-addressable filesystem. It also means you can’t change the commit message, the commit author, or the parent of a commit without also changing its SHA-1 value.

Every commit except the root is linked to one or more parents.

git cat-file -p commit #or git log -1 --pretty=raw commit

We can see the parent field, which connects the commit with its parent(s). Also the tree field, which connects the commit with a tree object.

git ls-tree [commit|tree] #or git cat-file -p tree

This concept becomes more important in later posts/articles on the security of CICD processes. In this article, we explore non-repudiation (“who” signed and committed “what” code) rather than “integrity”; however, because of how the filesystem behaves and how the signature method works, the integrity check and the non-repudiation check are coupled together.

For example, the signatures are computed (more on that later) over data that includes object hash IDs: each file’s blob hash is referenced by its parent tree and used to create that tree’s hash, the middle-tier tree hashes are in turn referenced by the top-level tree to create an overarching hash, and each commit references its top-level tree and its parent commit’s hash. This means signatures are effectively chained together through the hashes of previous commits.

If a hash changes in a previous commit, you’d expect the “trusted signature” not to match the modified content, just as you’d expect the hash check to fail. This conclusion rests on the principle that the hash IDs are part of the input to the digital signature.
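A small Python simulation illustrates the chaining argument. It uses a hypothetical linear history with a simplified commit format (no author or committer lines) and stand-in tree IDs: poisoning only the first snapshot changes the first commit ID, which changes the `parent` line, and therefore the ID, of every commit built on top of it. Any signature made over the original descendants would no longer correspond to the rewritten history.

```python
import hashlib


def object_id(obj_type: str, body: bytes) -> str:
    """SHA-1 over b'<type> <size>\\0' + body."""
    return hashlib.sha1(f"{obj_type} {len(body)}".encode() + b"\0" + body).hexdigest()


def build_history(tree_ids):
    """Create a linear history (one commit per tree ID) and return the commit IDs.

    Simplified commit body: tree line, optional parent line, blank line,
    message. Real commits also carry author and committer lines.
    """
    commit_ids, parent = [], None
    for n, tree in enumerate(tree_ids, start=1):
        body = f"tree {tree}\n"
        if parent:
            body += f"parent {parent}\n"
        body += f"\ncommit {n}\n"
        parent = object_id("commit", body.encode())
        commit_ids.append(parent)
    return commit_ids


# Stand-in tree IDs (hashes of arbitrary bytes, not real tree objects)
trees = [object_id("tree", f"snapshot {i}".encode()) for i in range(3)]
original = build_history(trees)

# Poison only the first snapshot; every later commit ID changes too
tampered = build_history([object_id("tree", b"poisoned")] + trees[1:])
for before, after in zip(original, tampered):
    print(before[:7], "->", after[:7], "changed" if before != after else "same")
```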

“Evaluating the Git source code shows that it’s the entire contents of the commit object that is signed. The GPG signature data then take part in calculating the SHA-1 checksum for the commit to become the commit’s hash ID. See gpg-interface.c and commit.c, functions sign_buffer and do_sign_commit respectively. The tag signing is in builtin/tag.c (see function do_sign and its caller); signed tags have their signatures appended rather than inserted, but otherwise this works pretty much the same way.”
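Based on my reading of the source referenced above, the signature is inserted as a `gpgsig` header at the end of the commit headers, with every continuation line indented by one space; verification strips that header back out and checks the signature against what remains. The Python sketch below mimics that layout with a placeholder signature and illustrative identity and timestamp values. It does not call GPG and is a simplification, not Git’s implementation.

```python
def add_gpgsig(commit: str, signature: str) -> str:
    """Insert a gpgsig header at the end of the commit headers.

    Continuation lines of the ASCII-armored signature are indented by one
    space, so a blank line inside the signature becomes a single space.
    """
    headers, sep, message = commit.partition("\n\n")
    sig_lines = signature.rstrip("\n").split("\n")
    return headers + "\ngpgsig " + "\n ".join(sig_lines) + sep + message


def strip_gpgsig(signed: str):
    """Recover (payload that was signed, signature) from a signed commit."""
    headers, sep, message = signed.partition("\n\n")
    kept, sig, in_sig = [], [], False
    for line in headers.split("\n"):
        if line.startswith("gpgsig "):
            in_sig = True
            sig.append(line[len("gpgsig "):])
        elif in_sig and line.startswith(" "):
            sig.append(line[1:])  # continuation line: drop the leading space
        else:
            in_sig = False
            kept.append(line)
    return "\n".join(kept) + sep + message, "\n".join(sig) + "\n"


# Placeholder values; a real signature is produced by the configured gpg.program
commit = ("tree 9c435a86e664be00db0d973e981425e4a3ef3f8d\n"
          "author secSandman <secsandman@securitysandman.com> 1415440617 +0100\n"
          "committer secSandman <secsandman@securitysandman.com> 1415440617 +0100\n"
          "\ninitial commit\n")
signature = "-----BEGIN PGP SIGNATURE-----\n\n(placeholder)\n-----END PGP SIGNATURE-----\n"
signed = add_gpgsig(commit, signature)
print(signed)
print(strip_gpgsig(signed) == (commit, signature))  # True
```

Because the commit ID is computed over the signed text, the signature itself becomes part of the hash chain described above.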

sha1(

commit message => "initial commit"

committer => secSandman <secsandman@securitysandman.com>

commit date => Sat Nov 8 10:56:57 2014 +0100

author => Christoph Burgdorf <christoph.burgdorf@gmail.com>

author date => Sat Nov 8 10:56:57 2014 +0100

tree => 9c435a86e664be00db0d973e981425e4a3ef3f8d

parents => [0d973e9c4353ef3f8ddb98a86e664be001425e4a]

)

Git Signature (Tags) Features

In this PoC we’ll be making use of the Git signature functionality.

Per Git references, if you have a GPG private key set up, you can now use it to sign new tags. All you have to do is use -s instead of -a:

$ git tag -s v1.5 -m 'my signed 1.5 tag'

You need a passphrase to unlock the secret key for

user: "secSandman <secsandman@securitysandman.com>"

2048-bit RSA key, ID 800430EB, created 2014-05-04

Git Signature (Commits) Features

In more recent versions of Git (v1.7.9 and above), you can now also sign individual commits. If you’re interested in signing commits directly instead of just the tags, all you need to do is add a -S to your git commit command.

Notice the lowercase -s (used with git tag) vs. the uppercase -S (used with git commit). Be careful: git commit -s only adds a Signed-off-by line; it does not create a cryptographic signature.

$ git commit -a -S -m 'Signed commit'

Additionally, you can ask git log to check any signatures it finds and list them in its output with the %G? format.

$ git log --pretty="format:%h %G? %aN %s"

5c3386c G secSandman Signed commit
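That %G? column lends itself to a simple CI gate. The Python sketch below parses output from `git log --pretty="format:%h %G? %aN %s"` and reports every commit whose status is not G. The status letters follow the git log pretty-format documentation; treating everything except G as a failure is my own policy assumption, and the second sample line is hypothetical.

```python
STATUS = {
    "G": "good (valid) signature",
    "B": "bad signature",
    "U": "good signature with unknown validity",
    "X": "good signature that has expired",
    "Y": "good signature made by an expired key",
    "R": "good signature made by a revoked key",
    "E": "signature cannot be checked (e.g. missing key)",
    "N": "no signature",
}


def unsigned_or_untrusted(log_output: str):
    """Return (hash, status, meaning) for every commit whose %G? is not 'G'."""
    failures = []
    for line in log_output.splitlines():
        parts = line.split(" ", 2)
        if len(parts) < 2:
            continue  # skip blank or malformed lines
        short_hash, status = parts[0], parts[1]
        if status != "G":
            failures.append((short_hash, status, STATUS.get(status, "unknown status")))
    return failures


sample = """5c3386c G secSandman Signed commit
ab06180 N someone Unsigned change"""
print(unsigned_or_untrusted(sample))
# [('ab06180', 'N', 'no signature')]
```

In a pipeline, the input would be the git log output for the commit range under review, and a non-empty result would fail the job.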

In Git 1.8.3 and later, git merge and git pull can be told to inspect and reject a merge when a commit does not carry a trusted GPG signature, using the --verify-signatures option.

If you use this option when merging a branch and it contains commits that are not signed and valid, the merge will not work.

$ git merge --verify-signatures non-verify

fatal: Commit ab06180 does not have a GPG signature.

You can also use the -S option with the git merge command to sign the resulting merge commit itself. The following example both verifies that every commit in the branch to be merged is signed and furthermore signs the resulting merge commit.

$ git merge --verify-signatures -S signed-branch

Other Git Signature considerations

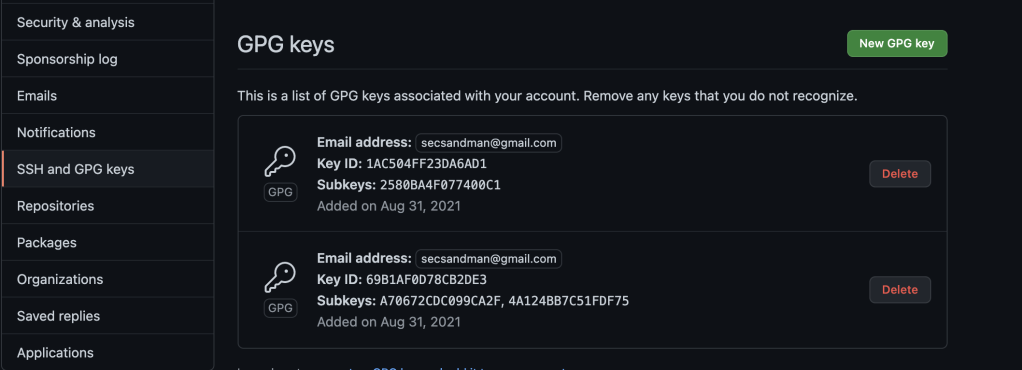

If you use a commercial Git hosting service, it may also offer an option to upload your GPG public keys for validation. This can be used to extend validation and to create downstream CICD automation that acts on signed/validated requests.

- https://docs.gitlab.com/ee/user/project/repository/gpg_signed_commits/

- https://docs.github.com/en/github/authenticating-to-github/managing-commit-signature-verification

- Reject unsigned commits via push rules

- Merge request rules that require signed commits

The point is that downstream Git server products are adopting more capabilities that can be used in the development process, to the extent that the SCM will reject merges and pushes altogether if your commits are not signed.

gpg functionality

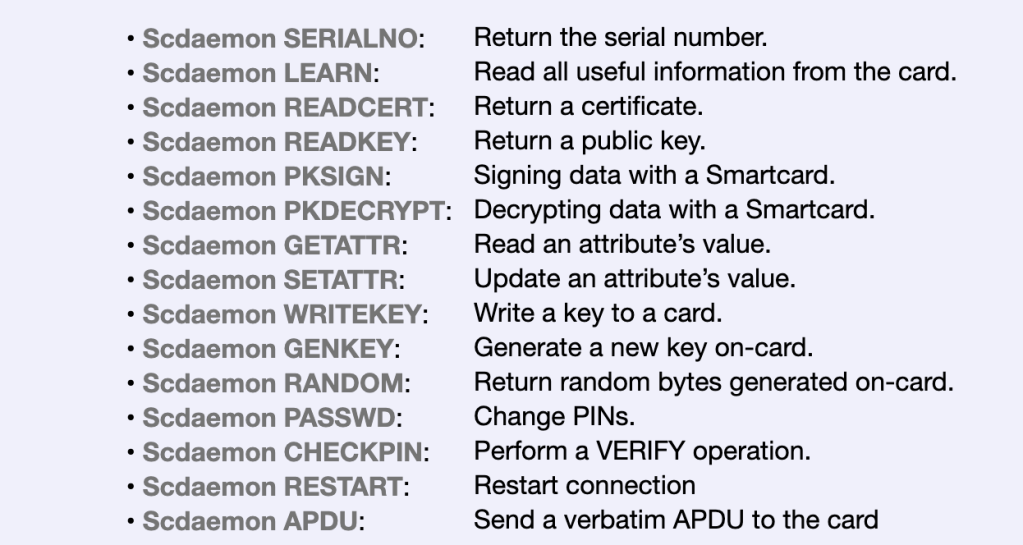

The primary reason for using GnuPG here is its programmable interface to the smart card. The main subsystem is scdaemon, a daemon that manages smart cards. It is usually invoked by gpg-agent and in general not used directly.

As an end user, most of the interaction is with gpg-card, which is used to administer smart cards and USB tokens. It provides a superset of the features of gpg --card-edit and can be considered a frontend to scdaemon, the daemon started by gpg-agent to handle smart cards. The scdaemon man page provides the following as a reference for its application specifications and their relationship to ISO/IEC standards:

The specifications for these cards are available at (http://g10code.com/docs/openpgp-card-1.0.pdf) and (http://g10code.com/docs/openpgp-card-2.0.pdf).

Secondarily, GPG is used as a reputable implementation of standard cryptographic operations to generate key material and certificates.

GnuPG also provides a decent RNG and does not rely directly on the Windows CryptoAPI.

What GnuPG does do is read a multitude of performance counters and system timers, check for # of bytes free in memory and on disk, and hash all the results together with the random_seed file. One could argue that these closed-source sources of data are just as suspect as CryptoAPI, but whatever. The basic RNG design appears to use a fast pool / slow pool scheme similar to that used in the very secure Yarrow RNG. See http://www.counterpane.com/yarrow.html for more details.

The --generate-key command, combined with --batch, can be used for unattended key generation. This is the most flexible way of generating keys, but it is also the most complex one. The parameters for the key are either read from stdin or given as a file on the command line.
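For the unattended route, GnuPG reads a parameter file. The Python sketch below only builds such a file for an RSA 4096 primary key with no expiry, mirroring the interactive choices made later in this post. The keywords come from GnuPG’s “Unattended key generation” documentation; that `Key-Usage: cert` yields a certify-only primary on your GnuPG version is an assumption worth checking. No Passphrase line is written, on the assumption that gpg-agent then prompts via pinentry, which keeps the passphrase out of the file.

```python
def keygen_params(name: str, email: str, bits: int = 4096) -> str:
    """Build a GnuPG unattended key-generation parameter file.

    Assumption: 'Key-Usage: cert' requests a certify-only primary key
    (verify against your GnuPG version's documentation).
    No Passphrase line: gpg-agent is expected to prompt for it.
    """
    params = [
        "Key-Type: RSA",
        f"Key-Length: {bits}",
        "Key-Usage: cert",
        f"Name-Real: {name}",
        f"Name-Email: {email}",
        "Expire-Date: 0",  # no expiry for the offline master key, as in this PoC
        "%commit",
    ]
    return "\n".join(params) + "\n"


text = keygen_params("secSandman", "secsandman@securitysandman.com")
print(text)
```

Saved to a file (for example, a hypothetical `master-key-params.txt` on the clean key-generation machine), it would be passed as `gpg --batch --generate-key master-key-params.txt`.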

The passphrase feature also allows us to store our keys encrypted when they are written to disk outside the smart card. To help safeguard your key, GnuPG does not store the raw private key on disk. Instead, it encrypts it using a symmetric encryption algorithm, which is why you need a passphrase to access the key. Thus there are two barriers an attacker must cross to access your private key: (1) they must actually acquire the key, and (2) they must get past the encryption.

Additionally, the subkey features and export features will be used when generating key material, creating sub signing keys and exporting key material to a secure back-up for disaster recovery purposes.

yubikey functionality

Interacting with the Yubikey

YubiKeys expose themselves to applications via common smart-card interfaces. GnuPG talks to the YubiKey’s OpenPGP application through scdaemon over the CCID (PC/SC) smart-card interface rather than through PKCS#11; PKCS#11 is used by other applications, for example with the YubiKey’s PIV application. PKCS#11 is the OASIS standard for a cryptographic token interface:

“The PKCS#11 standard specifies an application programming interface (API), called “Cryptoki,” for devices that hold cryptographic information and perform cryptographic functions. Cryptoki follows a simple object based approach, addressing the goals of technology independence (any kind of device) and resource sharing (multiple applications accessing multiple devices), presenting to applications a common, logical view of the device called a “cryptographic token”.”

The YubiKey itself can be managed via the YubiKey Manager CLI (ykman) or the YubiKey Manager graphical application. ykman is a Python 3.6 (or later) library and command line tool for configuring a YubiKey.

- https://support.yubico.com/hc/en-us/articles/360016614940-YubiKey-Manager-CLI-ykman-User-Manual

- https://developers.yubico.com/yubikey-manager-qt/

Authenticating to the Yubikey

The CLI and application require that you protect your YubiKey administrative functions and keys with a PIN. There are two separate PINs: one for admin functions and one for key material access. If you haven’t set a User PIN or an Admin PIN for OpenPGP, the default values are 123456 and 12345678, respectively. These should be changed: if you lose your YubiKey, someone can easily google the default PINs and access your keys.

Entering the user PIN incorrectly three times will cause the PIN to become blocked; it can be unblocked with either the Admin PIN or Reset Code.

Entering the Admin PIN or Reset Code incorrectly three times destroys all GPG data on the card. The Yubikey will have to be reconfigured.

| Name | Default Value | Use |
|---|---|---|
| PIN | 123456 | sign, decrypt and authenticate (SSH) |
| Admin PIN | 12345678 | reset PIN, change Reset Code, add keys and owner information |
| Reset Code | None | reset PIN |
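Because default PINs are such a common misconfiguration, an onboarding script could refuse them before a token is handed out. Below is a minimal Python sketch of such a check; the function is hypothetical (not part of ykman or GnuPG), and the minimum lengths of 6 for the user PIN and 8 for the Admin PIN reflect my understanding of the OpenPGP card defaults.

```python
DEFAULTS = {"user": "123456", "admin": "12345678"}
MIN_LENGTH = {"user": 6, "admin": 8}  # assumed OpenPGP card minimums


def pin_problems(user_pin: str, admin_pin: str) -> list:
    """Return a list of policy problems with a proposed PIN pair."""
    problems = []
    for kind, pin in (("user", user_pin), ("admin", admin_pin)):
        if pin == DEFAULTS[kind]:
            problems.append(f"{kind} PIN is still the factory default")
        if len(pin) < MIN_LENGTH[kind]:
            problems.append(f"{kind} PIN shorter than {MIN_LENGTH[kind]} characters")
    if user_pin == admin_pin:
        problems.append("user PIN and Admin PIN must differ")
    return problems


print(pin_problems("123456", "12345678"))
# ['user PIN is still the factory default', 'admin PIN is still the factory default']
```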

Generating Keys

Another feature to consider is whether your GPG keys are generated “outside” or “within” your YubiKey. Warning: generating the PGP key on the YubiKey ensures that malware can never steal your PGP private key, but it also means the key cannot be backed up, so if your YubiKey is lost or damaged the PGP key is irrecoverable.

Yubikey touch

Starting with the YubiKey 4, a touch feature was introduced to protect the use of the private keys with an additional layer. When this functionality is enabled, the result of a cryptographic operation involving a private key (signature, decryption or authentication) is released only if the correct user PIN is provided and the YubiKey touch sensor is triggered. This request for user presence ensures that no malware can issue commands to the YubiKey without the user noticing.

By default, YubiKey will perform encryption, signing and authentication operations without requiring any action from the user, after the key is plugged in and first unlocked with the PIN.

To require a touch for each key operation, install YubiKey Manager and have the Admin PIN at hand; the policy is set per key with ykman’s set-touch command. Older versions of YubiKey Manager use touch instead of set-touch.
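For reference, here is a small Python sketch that builds the touch-policy commands per OpenPGP key slot so they can be reviewed before running. It assumes YubiKey Manager 4.x syntax (`ykman openpgp keys set-touch <key> <policy>`, with slots sig/dec/aut); ykman 3.x used `ykman openpgp set-touch` with enc instead of dec, so check `ykman openpgp --help` for your version. By default it only prints.

```python
import shlex
import subprocess

SLOTS = ("sig", "dec", "aut")  # signature, decryption, authentication keys
POLICIES = ("on", "off", "fixed", "cached", "cached-fixed")


def set_touch_commands(policy: str = "on", slots=SLOTS):
    """Build ykman 4.x command lines that set a touch policy per OpenPGP slot."""
    if policy not in POLICIES:
        raise ValueError(f"unknown touch policy: {policy}")
    return [["ykman", "openpgp", "keys", "set-touch", slot, policy] for slot in slots]


def run(commands, dry_run: bool = True):
    """Print each command; execute it only when dry_run is False.

    ykman is expected to prompt for the Admin PIN when a command runs.
    """
    for cmd in commands:
        print(shlex.join(cmd))
        if not dry_run:
            subprocess.run(cmd, check=True)


# For commit signing alone, the signature slot is the one that matters
run(set_touch_commands("on", slots=("sig",)))
```

The `fixed` policy is worth a look for the signing slot: as I understand it, it cannot be turned off again without replacing the key.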

setting up poc

Setting up an Environment

In my case, I used two VMware Windows machines that I already had installed and running: one “dirty”, and one “new” that was used to generate the keys. There is consensus across the various “how-to” articles that you should not perform key generation on your “every-day” machine, because it may already have malware or may be managed/accessed by many others. Here’s a decent guide I found that ranks each option from highest risk to lowest risk:

- Daily-use operating system

- Virtual machine on daily-use host OS (using virt-manager, VirtualBox, or VMWare)

- Separate hardened Debian or OpenBSD installation which can be dual booted

- Live image, such as Debian Live or Tails

- Secure hardware/firmware (Coreboot, Intel ME removed)

- Dedicated air-gapped system with no networking capabilities

If you choose to use a local VM for the PoC:

Ensure the YubiKey can pass through into the virtual machine. Although I used VMware, I assume other products may have a similar issue that you’ll need to research.

- Shut down the virtual machine.

- Locate the VM’s .vmx configuration file. Right-click the VM while holding ALT/CTRL; a new option will appear to edit the .vmx file

- Open the configuration file with a text editor.

- Add the two usb.generic lines below to the file and save it.

At this point, a non-shared YubiKey or Security Key should be available for passthrough. If not, you may need to manually specify the USB vendor ID and product ID in the configuration file as well. The example below applies to a YubiKey 4 or 5 with all its modes enabled. For more information, please see YubiKey USB ID Values.

usb.generic.allowHID = "TRUE"

usb.generic.allowLastHID = "TRUE"

usb.quirks.device0 = "0x1050:0x0407 allow"

The next step is to download a GnuPG-compatible distribution into your environment. This may vary based on your operating system. In my case, I used the Windows-compatible Gpg4win from here. There are other official GnuPG-supported download options that come directly from the GnuPG source. WARNING: GnuPG binary releases are not directly managed or supported by the GnuPG team and, much like the point of this article, are subject to potential supply chain issues. However, you can find the source here for yourself.

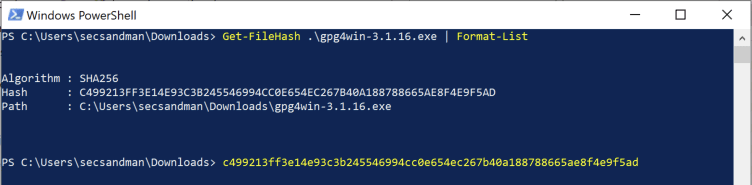

Validate the hash in PowerShell before installing …
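For readers not on PowerShell, the same check is easy to script. This Python sketch computes a file’s SHA-256 in chunks and compares it with a checksum published on the download page (assuming the page publishes SHA-256); the file name in the usage comment is a placeholder. A matching hash only proves the download is the file the page advertises; it says nothing about who built the binary, which is why the signature checks still matter.

```python
import hashlib


def sha256_file(path: str, chunk_size: int = 1 << 20) -> str:
    """Return the hex SHA-256 of a file, read in 1 MiB chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_published(path: str, published_hex: str) -> bool:
    """Compare against a published checksum (whitespace and case ignored)."""
    return sha256_file(path) == published_hex.strip().lower()


# Usage (placeholder values):
# matches_published("gpg4win-installer.exe", "<sha256 from the download page>")
```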

Validate the binary is signed and trusted by Microsoft

At this point, this does not prove the binary is “from” who we think it is, because we do not have the public PGP certificate, nor could I find its key server. It does, however, mean the binary is signed using a trusted signing certificate.

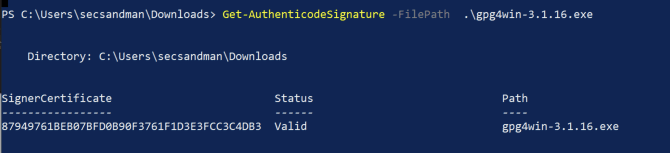

I tried to remove as much additional “bloat” as possible. The installer would not allow me to remove “Kleopatra”, which is a GUI front end.

Note the installation path: you may need to add it to your PATH later.

Generating the Keys

The recommended approach is to generate a master key and then subkeys. This is largely because the master key can be used for cross-signing and for generating revocation certificates in the event the keys are lost.

The “Master key” is your “C” key (certify). It is the key used to sign other people’s keys and your own subkeys and identities. If any of your E or S subkeys are lost or you’re worried they got exposed to malware, you can simply revoke them and create new ones as long as you trust the integrity of your C key. This is why it is recommended that your C key (“master key”) is carefully safeguarded and stored offline.

At the end, your goal is to have the following structure:

- Existing [SC] key — stored offline, removed from local disk

- Existing [E] key — kept on disk / token

- New [S] key — kept on disk / token

Final note — by default, GnuPG creates your Master key as [SC], but it doesn’t have to. You can specifically tell GnuPG to create a standalone [C] key.

The first key to generate is the master key. It will be used for certification only: to issue sub-keys that are used for encryption, signing and authentication.

Important: The master key should be kept offline at all times and only accessed to revoke or issue new sub-keys. Keys can also be generated on the YubiKey itself to ensure no other copies exist.

You’ll be prompted to enter and verify a passphrase – keep it handy as you’ll need it multiple times later.

Generate a strong passphrase which could be written down in a secure place or memorized:

gpg --expert --full-generate-key

Please select what kind of key you want:

(1) RSA and RSA (default)

(2) DSA and Elgamal

(3) DSA (sign only)

(4) RSA (sign only)

(7) DSA (set your own capabilities)

(8) RSA (set your own capabilities)

(9) ECC and ECC

(10) ECC (sign only)

(11) ECC (set your own capabilities)

(13) Existing key

Your selection? 8

Possible actions for a RSA key: Sign Certify Encrypt Authenticate

Current allowed actions: Sign Certify Encrypt

(S) Toggle the sign capability

(E) Toggle the encrypt capability

(A) Toggle the authenticate capability

(Q) Finished

Your selection? E

Possible actions for a RSA key: Sign Certify Encrypt Authenticate

Current allowed actions: Sign Certify

(S) Toggle the sign capability

(E) Toggle the encrypt capability

(A) Toggle the authenticate capability

(Q) Finished

Your selection? S

Possible actions for a RSA key: Sign Certify Encrypt Authenticate

Current allowed actions: Certify

(S) Toggle the sign capability

(E) Toggle the encrypt capability

(A) Toggle the authenticate capability

(Q) Finished

Your selection? Q

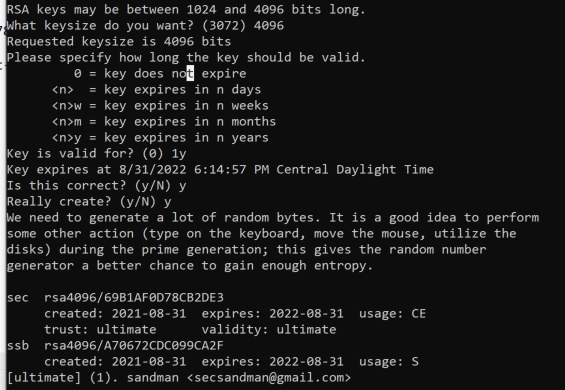

RSA keys may be between 1024 and 4096 bits long.

What keysize do you want? (2048) 4096

Requested keysize is 4096 bits

Please specify how long the key should be valid.

0 = key does not expire

<n> = key expires in n days

<n>w = key expires in n weeks

<n>m = key expires in n months

<n>y = key expires in n years

Key is valid for? (0) 0

Key does not expire at all

Is this correct? (y/N) y

Export the key ID as a variable (KEYID) for use later:

SET KEYID=69B1AF0D78CB2DE3

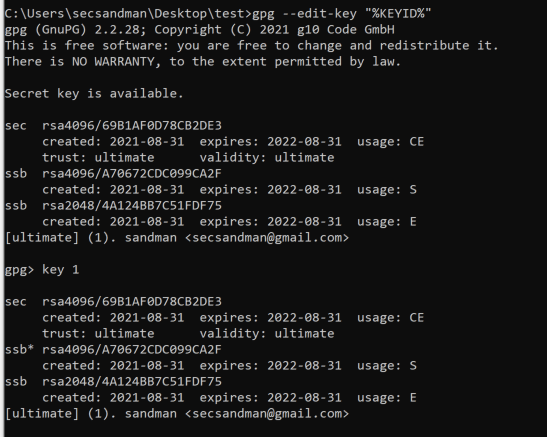

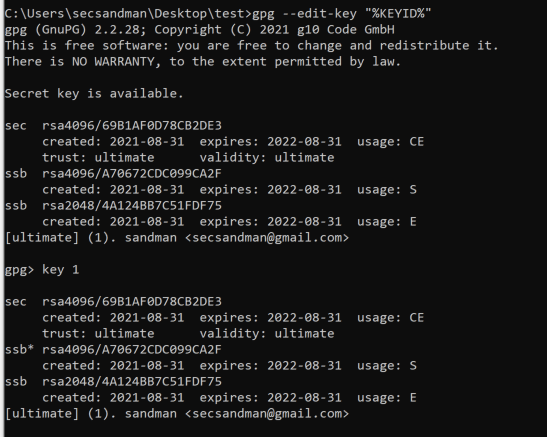

Edit the master key to create a new subkey. The subkey is what will be loaded into the YubiKey and used for signing Git commits.

gpg --expert --edit-key "%KEYID%"

sign key

sec rsa4096/69B1AF0D78CB2DE3

created: 2021-08-31 expires: 2022-08-31 usage: CE

trust: ultimate validity: ultimate

ssb rsa4096/A70672CDC099CA2F

created: 2021-08-31 expires: 2022-08-31 usage: S

gpg> addkey

- Use 4096-bit RSA keys.

- Use a 1 year expiration for sub-keys – they can be renewed using the offline master key

- Create a signing key by selecting addkey, then (4) RSA (sign only):

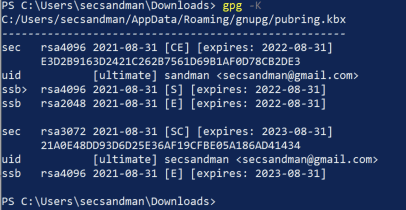

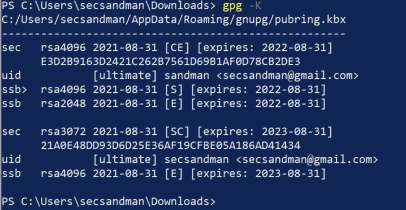

List the generated secret keys and verify the output:

$ gpg -K

/tmp.FLZC0xcM/pubring.kbx
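Eyeballing the listing works, but the same check can be scripted against GnuPG’s machine-readable format (`gpg --list-secret-keys --with-colons`). There, field 1 is the record type (sec/ssb), field 5 the key ID, and field 12 the capabilities: lowercase letters for the key itself, uppercase for the key as a whole. The Python sketch below asserts the structure this PoC aims for, a certify-only primary plus at least one signing subkey. The sample lines are hand-written with this post’s key IDs, not captured output.

```python
def key_capabilities(colon_listing: str):
    """Return (record type, key ID, own capabilities) for each key record."""
    keys = []
    for line in colon_listing.splitlines():
        fields = line.split(":")
        if fields[0] in ("sec", "ssb", "pub", "sub") and len(fields) > 11:
            # Lowercase letters describe this key; uppercase summarize the whole key
            own = "".join(ch for ch in fields[11] if ch.islower())
            keys.append((fields[0], fields[4], own))
    return keys


def check_poc_structure(colon_listing: str) -> list:
    """Flag deviations from: primary = [C] only, at least one [S] subkey."""
    keys = key_capabilities(colon_listing)
    primaries = [k for k in keys if k[0] in ("sec", "pub")]
    subkeys = [k for k in keys if k[0] in ("ssb", "sub")]
    problems = []
    if not primaries or primaries[0][2] != "c":
        problems.append("primary key should be certify-only [C]")
    if not any("s" in caps for _, _, caps in subkeys):
        problems.append("no signing [S] subkey found")
    return problems


sample = "\n".join([
    "sec:u:4096:1:69B1AF0D78CB2DE3:1630368000:::u:::cSC:",
    "ssb:u:4096:1:A70672CDC099CA2F:1630368000:1661904000:::::s:",
])
print(check_poc_structure(sample))  # []
```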

Save a copy of your keys: Remember that these should be stored securely offline on an encrypted device. The master key and sub-keys will be encrypted with your passphrase when exported.

Master Keys

C:\Users\secsandman\Desktop\test>gpg --export-secret-keys --armor 69B1AF0D78CB2DE3 > secret-master-key.gpg

C:\Users\secsandman\Desktop\test>gpg --export-secret-subkeys --armor 69B1AF0D78CB2DE3 > secret-sub-key.gpg

Public Keys

C:\Users\secsandman\Desktop\test>gpg --export --armor 69B1AF0D78CB2DE3 > pub-sub-key.gpg

Important: Without the public key, you will not be able to use GPG to encrypt, decrypt, or sign messages. However, you will still be able to use the YubiKey for SSH authentication. Whether or not you use the public key while moving the private key onto the YubiKey, keep it: depending on your use case you will need it later (for example, for the Git server set-up).

Revocation certificate

Although we will back up and store the master key in a safe place, it is best practice never to rule out the possibility of losing it or of the backup failing. Without the master key, it will be impossible to renew or rotate subkeys or to generate a revocation certificate; the PGP identity will be useless.

Even worse, we cannot advertise this fact in any way to those that are using our keys. It is reasonable to assume this will occur at some point and the only remaining way to deprecate orphaned keys is a revocation certificate.

To create the revocation certificate:

gpg --output revoke.asc --gen-revoke "%KEYID%"

Back up key material (Optional)

YubiKey does not allow export of the private key, just the public cert. Once keys are moved to YubiKey, they cannot be moved again! This means you want to create an encrypted backup of the keyring and consider using a paper copy of the keys as an additional backup measure. Then put your back-up and pass-phrases in a secure safe and password vault.

The exact steps of this process vary by operating system, so for Windows I’ll just reference “BitLocker For Dummies”.

If you’re on Linux, you may want to perform the following:

$ sudo dmesg | tail

$ sudo dd if=/dev/urandom of=/dev/mmcblk0 bs=4M status=progress

$ sudo fdisk /dev/mmcblk0


$ sudo cryptsetup luksFormat /dev/mmcblk0p1

$ sudo cryptsetup luksOpen /dev/mmcblk0p1 secret

$ sudo mkfs.ext2 /dev/mapper/secret -L gpg-$(date +%F)

Mount the filesystem and copy the directory with the keys you exported (on Windows):

$ sudo mkdir /mnt/encrypted-storage

$ sudo mount /dev/mapper/secret /mnt/encrypted-storage

$ sudo cp -avi $GNUPGHOME /mnt/encrypted-storage/

Configuring and Transferring Keys to the Token

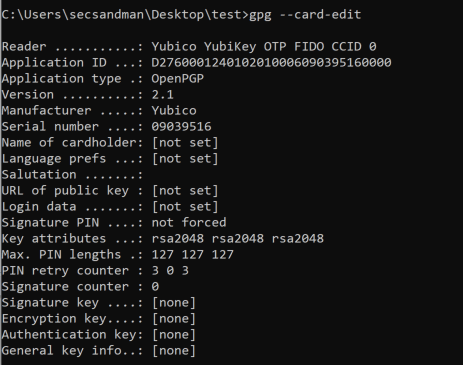

Next, we’ll configure the smart card and transfer the keys onto the token. Notice that no signature key is set up yet:

gpg --card-edit

C:\Users\secsandman\Desktop\test>gpg --card-edit

Reader ...........: Yubico YubiKey OTP FIDO CCID 0

Application ID ...: D2760001240102010006090395160000

Application type .: OpenPGP

Version ..........: 2.1

Manufacturer .....: Yubico

Serial number ....: 09039516

Name of cardholder: [not set]

Language prefs ...: [not set]

Salutation .......:

URL of public key : [not set]

Login data .......: [not set]

Signature PIN ....: not forced

Key attributes ...: rsa2048 rsa2048 rsa2048

Max. PIN lengths .: 127 127 127

PIN retry counter : 3 0 3

Signature counter : 0

Signature key ....: [none]

Encryption key....: [none]

Authentication key: [none]

General key info..: [none]

Change PIN

The GPG interface is separate from other modules on a Yubikey such as the PIV interface. The GPG interface has its own PIN, Admin PIN, and Reset Code – these should be changed from default values!


gpg/card> admin

Admin commands are allowed

gpg/card> passwd

gpg: OpenPGP card no. D2760001240102010006055532110000 detected

1 - change PIN

2 - unblock PIN

3 - change Admin PIN

4 - set the Reset Code

Q - quit

Your selection? 3

PIN changed.

1 - change PIN

2 - unblock PIN

3 - change Admin PIN

4 - set the Reset Code

Q - quit

Your selection? 1

PIN changed.

1 - change PIN

2 - unblock PIN

3 - change Admin PIN

4 - set the Reset Code

Q - quit

Your selection? q

Transferring Keys

Important: Transferring keys to the YubiKey using keytocard is a destructive, one-way operation. Make sure you’ve made a backup before proceeding: keytocard converts the local, on-disk key into a stub, which means the on-disk copy is no longer usable to transfer to subsequent security key devices or mint additional keys.

You will be prompted for the master key passphrase and Admin PIN.

Select and transfer the signature key as indicated by [S]

Verify the key(s) were transferred correctly:

The > after ssb indicates the stub for the transferred key (the secret key now lives on the card); this is normally a good sign that the procedure was successful.

Support

- https://support.yubico.com/hc/en-us/articles/360013714479-Troubleshooting-Issues-with-GPG

- https://stackoverflow.com/questions/5587513/how-to-export-private-secret-asc-key-to-decrypt-gpg-files

- https://developers.yubico.com/yubikey-manager-qt/

- https://support.yubico.com/hc/en-us/articles/360016614940-YubiKey-Manager-CLI-ykman-User-Manual

- https://docs.yubico.com/software/yubikey/tools/ykman/Using_the_ykman_GUI.html

Git Configuration

On your client:

git config --global commit.gpgsign true

You’ll need the key ID of the stub (>) signing subkey for your Git configuration:

C:\Users\secsandman\Desktop\test>gpg --list-secret-keys --keyid-format LONG

git config --global user.signingkey A70672CDC099CA2F

You may also need to point Git to the correct GPG path:

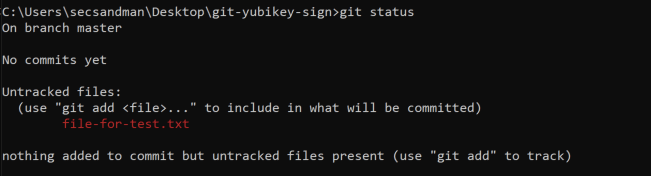

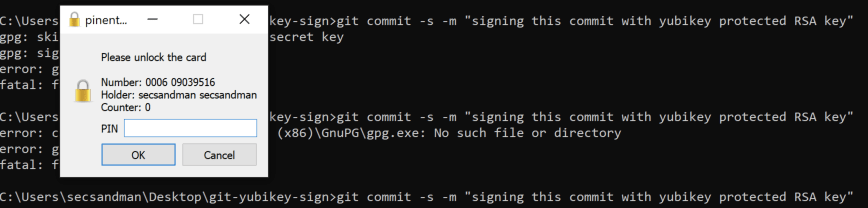

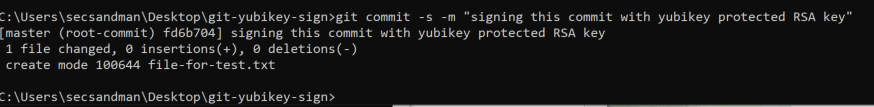

git config --global gpg.program "C:\Program Files (x86)\GnuPG\bin\gpg.exe"

Testing a YubiKey-signed Commit

Afterwards you should be ready to sign your first commit …

You can also upload the public key for your YubiKey signing subkey, which we exported in a previous step, to GitHub/GitLab:

C:\Users\secsandman\Desktop\test>gpg --export --armor 69B1AF0D78CB2DE3 > pub-sub-key.gpg

Push your signed commit to origin master.

Support

- https://git-scm.com/book/en/v2/Git-Tools-Signing-Your-Work

- https://docs.gitlab.com/ee/user/project/repository/gpg_signed_commits/

- https://docs.github.com/en/github/authenticating-to-github/managing-commit-signature-verification

- Reject unsigned commits via push rules

- Merge request rules that require signed commits

operational architecture (in-practice)

As I went on this research/PoC journey, I learned a lot more about the feasibility and operational impact of implementing such a security control. Let’s focus on some of the basics that scaling this solution would require at a medium to large company.

Basic Operational Considerations

- You would need YubiKey onboarding, distribution, and replacement mechanisms

- You would need a way to revoke the YubiKey and/or its keys, rendering them useless in your source control system and other systems (this may be achieved in a few ways)

- You would need a purchase/replacement process for the backup storage (USB/hard drives), or you would have to trust a software key vault, protected with some form of soft-token MFA, with the backup keys

- You may need a software key vault for the pass-phrases used on the back-up storage and token itself

Broader Development Considerations

The article below is really gold. I’ll copy a few key points from it, but I highly suggest that anyone who made it through this article read that one too.

Mike explains that the process we’ve followed up until now may cover small commits and patches, but it fails to consider large merge requests with hundreds of commits and a larger volume of code being introduced back into the main branch.

Here is an excerpt; it is absolutely key to a broader design decision:

- Option #1: Request that the user squash all the commits into a single commit, thereby avoiding the problem entirely by applying the previously discussed methods. I personally dislike this option for a few reasons:

  - We can no longer follow the history of that feature/bugfix in order to learn how it was developed or see alternative solutions that were attempted but later replaced.

  - It renders git bisect useless. If we find a bug in the software that was introduced by a single patch consisting of 300 squashed commits, we are left to dig through the code and debug ourselves, rather than having Git possibly figure out the problem for us.

- Option #2: Adopt a security policy that requires signing only the merge commit (forcing a merge commit to be created with --no-ff if needed).

  - This is certainly the quickest solution, allowing a reviewer to sign the merge after having reviewed the diff in its entirety.

  - However, it leaves individual commits open to exploitation. For example, one commit may introduce a payload that a future commit removes, thereby hiding it from the overall diff, but introducing terrible effects should the commit be checked out individually (e.g. by git bisect). Squashing all commits (option #1), signing each commit individually (option #3), or simply reviewing each commit individually before performing the merge (without signing each individual commit) would prevent this problem.

  - This also does not fully prevent the situation mentioned in the hypothetical story at the beginning of this article: others can still commit with you as the author, but the commit would not have been signed.

  - Preserves the SHA-1 hashes of each individual commit.

- Option #3: Sign each commit to be introduced by the merge.

  - The tedium of this chore can be greatly reduced by using gpg-agent (http://www.gnupg.org/documentation/manuals/gnupg/Invoking-GPG_002dAGENT.html).

  - Be sure to carefully review each commit rather than the entire diff to ensure that no malicious commits sneak into the history (see bullets for option #2). If you instead decide to script the signing of each commit without reviewing each individual diff, you may as well go with option #2.

  - Also useful if one needs to cherry-pick individual commits, since that would result in all commits having been signed.

  - One may argue that this option is unnecessarily redundant, considering that one can simply review the individual commits without signing them, then simply sign the merge commit to signify that all commits have been reviewed (option #2). The important point to note here is that this option offers proof that each commit was reviewed (unless it is automated).

  - This will create a new commit for each (the SHA-1 hash is not preserved).
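The option #2 weakness, a payload introduced in one commit and removed in a later one, is easy to demonstrate. The Python sketch below models three hypothetical snapshots of a single file and uses difflib to compare the net diff, which is all a reviewer who only signs the merge sees, with the per-commit diffs that option #3 (or per-commit review) would surface.

```python
import difflib

# Hypothetical history of a single file across three commits
snapshots = [
    ["def login(user, pw):", "    return check(user, pw)"],
    ["def login(user, pw):", "    if user == 'backdoor': return True", "    return check(user, pw)"],
    ["def login(user, pw):", "    return check(user, pw)  # tidy"],
]


def changed_lines(old, new):
    """Return only the added/removed lines of a unified diff."""
    diff = difflib.unified_diff(old, new, lineterm="")
    return [line for line in diff
            if line.startswith(("+", "-")) and not line.startswith(("+++", "---"))]


# What a reviewer of only the merge sees: first snapshot vs. last snapshot
print("net diff:", changed_lines(snapshots[0], snapshots[-1]))

# What reviewing (or signing) each commit individually surfaces
for i in range(1, len(snapshots)):
    print(f"commit {i}:", changed_lines(snapshots[i - 1], snapshots[i]))
```

The backdoor never appears in the net diff, yet checking out commit 1 on its own (for example during git bisect) yields code that contains it.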

My takeaway is that although we can technically implement signing now, “how” you implement signing in the development and code review process will ultimately determine how effective and trustworthy the signing technology actually is in practice.

Which raises the questions:

- If we can sign, should we …

- If we should, then where and on what …

- How often ….

- and by whom …